Project Team Members:

Northwestern University


Enabling High Performance Application I/O for Scientific Data Management

Objectives:

Many scientific applications are constrained by the rate at which data can be moved to and from storage resources. The goal of this work is to provide software that enables scientific applications to access available storage resources more efficiently. This includes work on parallel file systems, optimizations to middleware such as MPI-IO implementations, and the creation of new high-level application programming interfaces (APIs) designed with high-performance parallel access in mind.

Noncontiguous Parallel I/O:

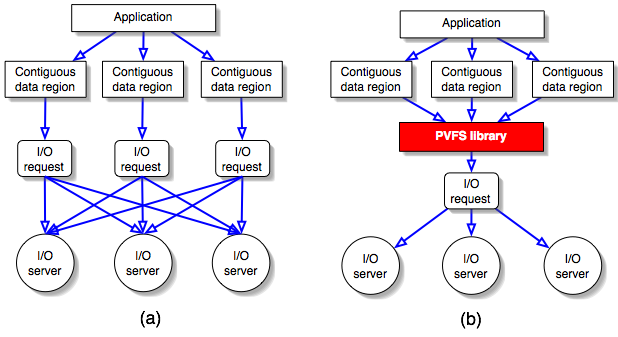

Scientific computing often requires noncontiguous access to small regions of data. Traditionally, parallel file systems satisfy such requests with multiple contiguous I/O operations, one per region, which results in a large request-processing overhead. To improve the performance of noncontiguous I/O, we have created list I/O, which supports noncontiguous access natively by letting a single request describe many noncontiguous regions. We have implemented our ideas in the Parallel Virtual File System (PVFS). Figure 1(a) depicts the traditional approach, which issues a separate I/O request for each noncontiguous region. Figure 1(b) illustrates our list I/O design, in which those requests are reduced to a single one. Our research and experiments show that list I/O outperforms current noncontiguous I/O access methods in most I/O situations and can substantially improve the performance of real-world scientific applications.

Figure 1. (a) Multiple I/O dataflow for noncontiguous I/O. In the multiple I/O approach, each noncontiguous data region requires a separate I/O request that the I/O servers must process. Each arrow represents a single request. (b) List I/O dataflow for noncontiguous I/O. Noncontiguous data regions are described in a single I/O request.
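The difference between the two dataflows in Figure 1 can be illustrated with a toy model. The following Python sketch is not PVFS code. `SimulatedFile`, `multiple_io_read`, and `list_io_read` are hypothetical names, the "file" is an in-memory byte string, and the only cost the model tracks is the number of requests the I/O servers must process. A real list I/O implementation would also need to bound how many regions fit in one request. That detail is omitted here.

```python
from dataclasses import dataclass

# A noncontiguous region is described as (file offset, length in bytes).
Region = tuple[int, int]


@dataclass
class SimulatedFile:
    """Hypothetical in-memory stand-in for a file stored on I/O servers.

    `requests` counts how many I/O requests the "servers" have processed.
    """
    data: bytes
    requests: int = 0


def _check(f: SimulatedFile, regions: list[Region]) -> None:
    """Reject regions that fall outside the file."""
    for offset, length in regions:
        if offset < 0 or length < 0 or offset + length > len(f.data):
            raise ValueError(f"region ({offset}, {length}) is out of bounds")


def multiple_io_read(f: SimulatedFile, regions: list[Region]) -> bytes:
    """Traditional approach: one separate request per noncontiguous region."""
    _check(f, regions)
    out = bytearray()
    for offset, length in regions:
        f.requests += 1  # each region costs its own request
        out += f.data[offset:offset + length]
    return bytes(out)


def list_io_read(f: SimulatedFile, regions: list[Region]) -> bytes:
    """List I/O: every (offset, length) pair travels in a single request."""
    _check(f, regions)
    f.requests += 1  # one request describes all regions
    return b"".join(f.data[offset:offset + length] for offset, length in regions)


if __name__ == "__main__":
    regions = [(1, 2), (6, 3), (12, 1), (18, 2)]
    a = SimulatedFile(b"0123456789ABCDEFGHIJ")
    b = SimulatedFile(b"0123456789ABCDEFGHIJ")
    print(multiple_io_read(a, regions), a.requests)  # b'12678CIJ' 4
    print(list_io_read(b, regions), b.requests)      # b'12678CIJ' 1
```

Both functions return the same bytes. Only the request count differs: it grows with the number of regions under multiple I/O but stays at one under list I/O.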

Parallel NetCDF:

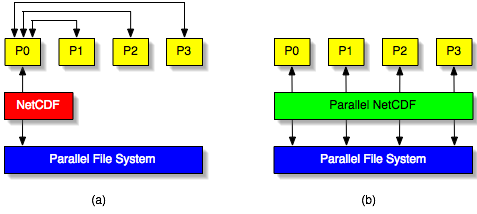

NetCDF is a popular package for storing data files in scientific applications. NetCDF consists of both an API and a portable file format. The API provides a consistent interface for accessing NetCDF files across multiple platforms, while the NetCDF file format guarantees data portability.

The NetCDF API provides a convenient mechanism for a single process to define and access variables and attributes in a NetCDF file. However, it does not define a parallel access mechanism, so parallel applications operating on NetCDF files must serialize access. This is typically accomplished by shipping all data to and from a single process that performs the NetCDF operations. This mode of access is both cumbersome for the application programmer and considerably slower than parallel access to the NetCDF file.

In this task we propose an alternative API for accessing NetCDF-format files from a parallel application. This API allows all processes in a parallel application to access a NetCDF file simultaneously, which is more convenient and allows for higher performance. Figure 2 compares how multiple processes access data when using serial NetCDF and when using parallel NetCDF.

Figure 2. Comparison of data access using sequential NetCDF and parallel NetCDF. (a) With NetCDF, write operations go through one of the clients. (b) Parallel NetCDF enables concurrent writes to the parallel file system.
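In the concurrent case of Figure 2(b), each process needs to know which part of a shared variable it owns. The following Python sketch shows one common way to compute that ownership, a row-block decomposition of a 2-D variable into per-process `start`/`count` arrays. The names `block_partition` and `hyperslab` are hypothetical, and the decomposition is an illustrative assumption, not a scheme prescribed by parallel NetCDF. In a real program, arrays like these would be passed to a collective put call such as PnetCDF's `ncmpi_put_vara_int_all`. That call is not modeled here.

```python
def block_partition(dim_len: int, nprocs: int, rank: int) -> tuple[int, int]:
    """Return (start, count) of the contiguous block of rows owned by `rank`.

    The first `dim_len % nprocs` ranks each get one extra row, so counts
    differ by at most one and the blocks tile [0, dim_len) with no gaps
    or overlap.
    """
    if nprocs <= 0 or not 0 <= rank < nprocs:
        raise ValueError("need nprocs > 0 and 0 <= rank < nprocs")
    base, extra = divmod(dim_len, nprocs)
    start = rank * base + min(rank, extra)
    count = base + (1 if rank < extra else 0)
    return start, count


def hyperslab(shape: tuple[int, int], nprocs: int, rank: int):
    """start/count arrays for a row-block decomposition of a 2-D variable.

    Each rank takes a block of rows and every column of those rows.
    """
    row_start, row_count = block_partition(shape[0], nprocs, rank)
    return [row_start, 0], [row_count, shape[1]]


if __name__ == "__main__":
    # A 10 x 4 variable written concurrently by 4 processes.
    for rank in range(4):
        print(rank, hyperslab((10, 4), 4, rank))
```

With the serial approach of Figure 2(a), all 40 values would pass through one process. With this decomposition, ranks 0 and 1 each write 3 rows and ranks 2 and 3 each write 2 rows, all at the same time.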

Related Links:

- Parallel NetCDF project web page: software, documents, etc.

- Mailing list: parallel-netcdf@mcs.anl.gov (archive)

- Extreme Linux: Parallel NetCDF - an article from Linux Magazine

Publications:

- Avery Ching, Alok Choudhary, Wei-keng Liao, Robert Ross, and William Gropp. ``Efficient Structured Data Access in Parallel File Systems.'' International Journal of High Performance Computing and Networking, issue 3, 2004.

- Avery Ching, Alok Choudhary, Wei-keng Liao, Rob Ross, and William Gropp. ``Efficient Structured Data Access in Parallel File Systems.'' In the Proceedings of Cluster 2003, December, 2003.

- Jianwei Li, Wei-keng Liao, Alok Choudhary, Robert Ross, Rajeev Thakur, William Gropp, Rob Latham, Andrew Siegel, Brad Gallagher, and Michael Zingale. ``Parallel netCDF: A Scientific High-Performance I/O Interface.'' In the Proceedings of the Supercomputing Conference, November, 2003.

- Avery Ching, Alok Choudhary, Kenin Coloma, Wei-keng Liao, Rob Ross, and William Gropp. ``Noncontiguous I/O through MPI-IO.'' In the Proceedings of the 3rd IEEE/ACM International Symposium on Cluster Computing and the Grid, May, 2003.

- Avery Ching, Alok Choudhary, Wei-keng Liao, Rob Ross, and William Gropp. ``Noncontiguous I/O through PVFS.'' In the Proceedings of the 2002 IEEE International Conference on Cluster Computing, September, 2002.

People:

- Northwestern University

- Argonne National Laboratory

Sponsor:

- Scientific Data Management Center (SDM) under the DOE program of Scientific Discovery through Advanced Computing (SciDAC)